Understanding 3D Seismic Data Processing


3D Seismic Data Processing

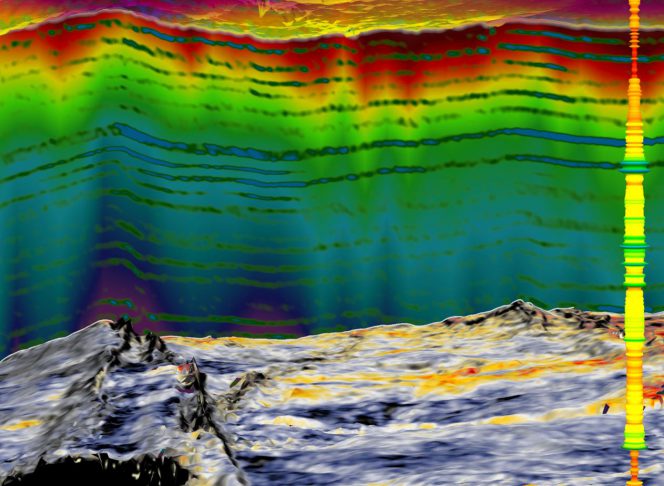

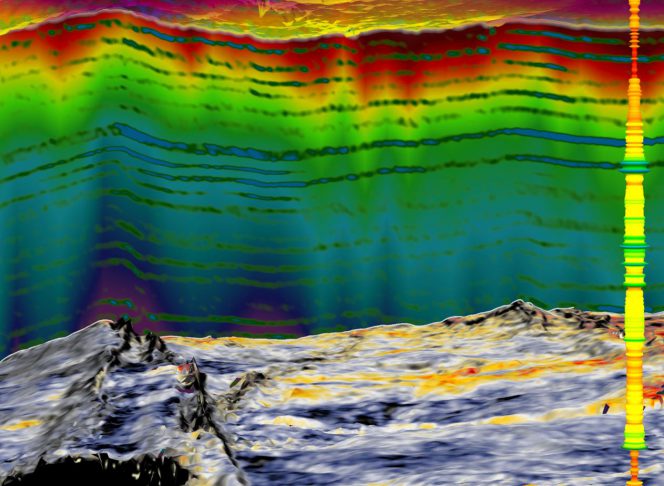

Seismic data processing is a complex process comprising several elements that contribute to its outcome. By definition, seismic data processing is the analysis of recorded seismic signals to filter out unwanted noise, creating an image of the subsurface that enables geological interpretation. The goal is to obtain an estimate of the distribution of material properties in the subsurface.

Processing of Seismic Data

After acquisition, the seismic data are recorded in digital form by each channel of the recording instrument, and each channel's record is represented by a time series. Processing algorithms are designed for and applied to either single-channel time series, one at a time, or multichannel time series.

The Fourier transform is the foundation of digital signal processing applied to seismic data. Beyond the one- and two-dimensional Fourier transforms and their applications, the fundamentals of signal processing also include the study of recorded seismic data from around the world. By referring to field data examples, geophysicists and geologists examine the characteristics of the seismic signal: primary reflections from layer boundaries, random noise, and coherent noise such as multiple reflections, reverberations, and linear noise associated with guided waves and point scatterers. The fundamentals of signal processing conclude with the basic processing sequence and guidelines for quality control in processing.
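To make the role of the Fourier transform concrete, the following is a minimal sketch that computes the amplitude spectrum of a single-channel time series with a naive discrete Fourier transform. The function name `amplitude_spectrum`, the 4 ms sample interval, and the synthetic trace (a 30 Hz sinusoid plus a weaker 60 Hz component) are all assumptions made for illustration; they are not taken from any particular data set or processing package. Only the Python standard library is used, so the transform is slow, and a production system would use an FFT instead.

```python
import cmath
import math


def amplitude_spectrum(trace, dt):
    """Return (frequencies in Hz, amplitudes) of a real trace via a naive DFT."""
    n = len(trace)
    freqs, amps = [], []
    # For real-valued input only frequencies from 0 up to Nyquist are needed.
    for k in range(n // 2 + 1):
        coeff = sum(
            sample * cmath.exp(-2j * math.pi * k * j / n)
            for j, sample in enumerate(trace)
        )
        freqs.append(k / (n * dt))
        amps.append(abs(coeff))
    return freqs, amps


# Hypothetical trace: 250 samples at a 4 ms sample interval (1 s of data).
dt = 0.004
n_samples = 250
trace = [
    math.sin(2 * math.pi * 30.0 * j * dt)
    + 0.3 * math.sin(2 * math.pi * 60.0 * j * dt)
    for j in range(n_samples)
]

freqs, amps = amplitude_spectrum(trace, dt)
dominant_hz = freqs[amps.index(max(amps))]
print(f"Dominant frequency: {dominant_hz:.1f} Hz")
```

With these assumed parameters the frequency sampling is 1 Hz, so the stronger 30 Hz component falls exactly on a frequency bin and should appear as the spectral peak.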

What is 3D Seismic Data?

3D seismic data are a set of numerous closely spaced seismic lines that provide a densely sampled spatial measure of subsurface reflectivity. Typical receiver line spacing ranges from 300 m to 600 m, and typical distances between shotpoints and receiver groups are 25 m (offshore and internationally) and 34 m to 67 m onshore. Bin sizes are commonly 25 m.

The resultant data set can be “cut” in any direction and still display a well-sampled seismic section. The in-lines are the original seismic lines, while the crosslines are the lines displayed perpendicular to the in-lines.
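The idea of cutting the volume in different directions can be sketched with a small in-memory cube. This is an illustrative sketch only: the layout `volume[inline][crossline][sample]` and the helper names (`make_volume`, `inline_section`, `crossline_section`, `time_slice`) are assumptions, and the sample values are position codes rather than real amplitudes, chosen so that each slice is easy to check.

```python
def make_volume(n_inlines, n_crosslines, n_samples):
    """Build a toy cube whose values encode (inline, crossline, sample)."""
    return [
        [
            [il * 10000 + xl * 100 + t for t in range(n_samples)]
            for xl in range(n_crosslines)
        ]
        for il in range(n_inlines)
    ]


def inline_section(volume, il):
    """All traces along one in-line: shape (crosslines, samples)."""
    return volume[il]


def crossline_section(volume, xl):
    """All traces along one crossline: shape (inlines, samples)."""
    return [inline[xl] for inline in volume]


def time_slice(volume, t):
    """A horizontal cut at one sample index: shape (inlines, crosslines)."""
    return [[trace[t] for trace in inline] for inline in volume]


volume = make_volume(n_inlines=3, n_crosslines=4, n_samples=5)
print(len(inline_section(volume, 1)), "traces on in-line 1")
print(len(crossline_section(volume, 2)), "traces on crossline 2")
print(time_slice(volume, 4)[2][3], "is the value at in-line 2, crossline 3, sample 4")
```

In practice a cube of realistic size would be held in an array library or read from disk in slabs; nested lists are used here only to keep the sketch dependency-free.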

In a properly migrated 3D seismic data set, events are placed in their proper vertical and horizontal positions, providing more accurate subsurface maps. By contrast, 2D seismic lines are more widely spaced, and significant interpolation between them might be necessary. Moreover, 3D seismic data provide detailed information about fault distribution and subsurface structures. Hence, computer-based interpretation and display of 3D seismic data allow for more thorough analysis than 2D seismic data.

Limitations to the Processing of 3D Seismic Data

The most common problem in seismic data processing is the consistency of results. Given the same raw data, the results of processing by one organisation most likely differ from those of the next. The significant differences are in frequency content, S/N ratio, and degree of structural continuity from one section to another. These differences often stem from the choice of parameters and the detailed aspects of how processing algorithms are implemented. For example, several contractors may all apply residual statics corrections to the same data. However, the programs each contractor uses to estimate residual statics most likely differ in how they handle the correlation window, select the traces used for crosscorrelation with the pilot trace, and statistically treat the correlation peaks.
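The crosscorrelation step mentioned in the residual statics example can be sketched as follows. This is a deliberately simplified illustration, not any contractor's method: it estimates a single time shift between one trace and a pilot trace by picking the lag with the largest crosscorrelation inside a search range. The function names, the toy wavelet, the 4 ms sample interval, and the `max_lag` parameter are all assumptions. Changing `max_lag`, or how the peak is picked, is exactly the kind of implementation detail that can make results differ between organisations.

```python
def crosscorrelation_lag(trace, pilot, max_lag):
    """Return the lag in samples, within [-max_lag, max_lag], that maximises
    the crosscorrelation of `trace` with `pilot`.
    A positive lag means the trace is delayed relative to the pilot."""
    best_lag, best_value = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        value = 0.0
        for i, pilot_sample in enumerate(pilot):
            j = i + lag
            if 0 <= j < len(trace):
                value += pilot_sample * trace[j]
        if value > best_value:
            best_lag, best_value = lag, value
    return best_lag


def delay(trace, shift):
    """Delay a trace by `shift` samples (shift >= 0), padding with zeros."""
    return [0.0] * shift + trace[: len(trace) - shift]


# Hypothetical pilot trace: a small symmetric wavelet centred on sample 20.
pilot = [0.0] * 50
pilot[19], pilot[20], pilot[21] = -0.5, 1.0, -0.5

# Hypothetical input trace: the same wavelet arriving 3 samples later.
trace = delay(pilot, 3)

dt_ms = 4.0  # assumed sample interval in milliseconds
lag = crosscorrelation_lag(trace, pilot, max_lag=10)
static_ms = lag * dt_ms
print(f"Estimated shift: {lag} samples ({static_ms:.0f} ms)")
```

A real residual statics program would go further, for example by decomposing the picked shifts into surface-consistent source and receiver terms; the sketch covers only the lag-picking step.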

Another key concern in seismic data processing is the generation of artifacts while trying to enhance the signal. A good seismic data analysis program not only performs the task for which it is written, but also generates minimal numerical artifacts. One feature that distinguishes a production program from a research program, which is aimed at testing whether an idea works, is the refinement of the algorithm in the production program to minimise artifacts. Processing can be hazardous if artifacts overpower the intended action of the program.


3D Seismic Data Processing is a 3-day training course held from 26 to 28 February 2020 in Kuala Lumpur. The objective of the course is to build an understanding of the theoretical background of seismic data processing algorithms, and of the quality control and quality assurance applied at each processing step to ensure an optimal seismic data product from a data quality perspective. The course covers the full seismic processing workflow needed to produce data amenable to interpretation, with case studies from onshore, transition zone, and offshore seismic surveys.
